Agentic AI is rewriting what it means to manage. CHROs now face a new mandate to protect quality and accountability.


Agentic AI is redefining what it means to manage

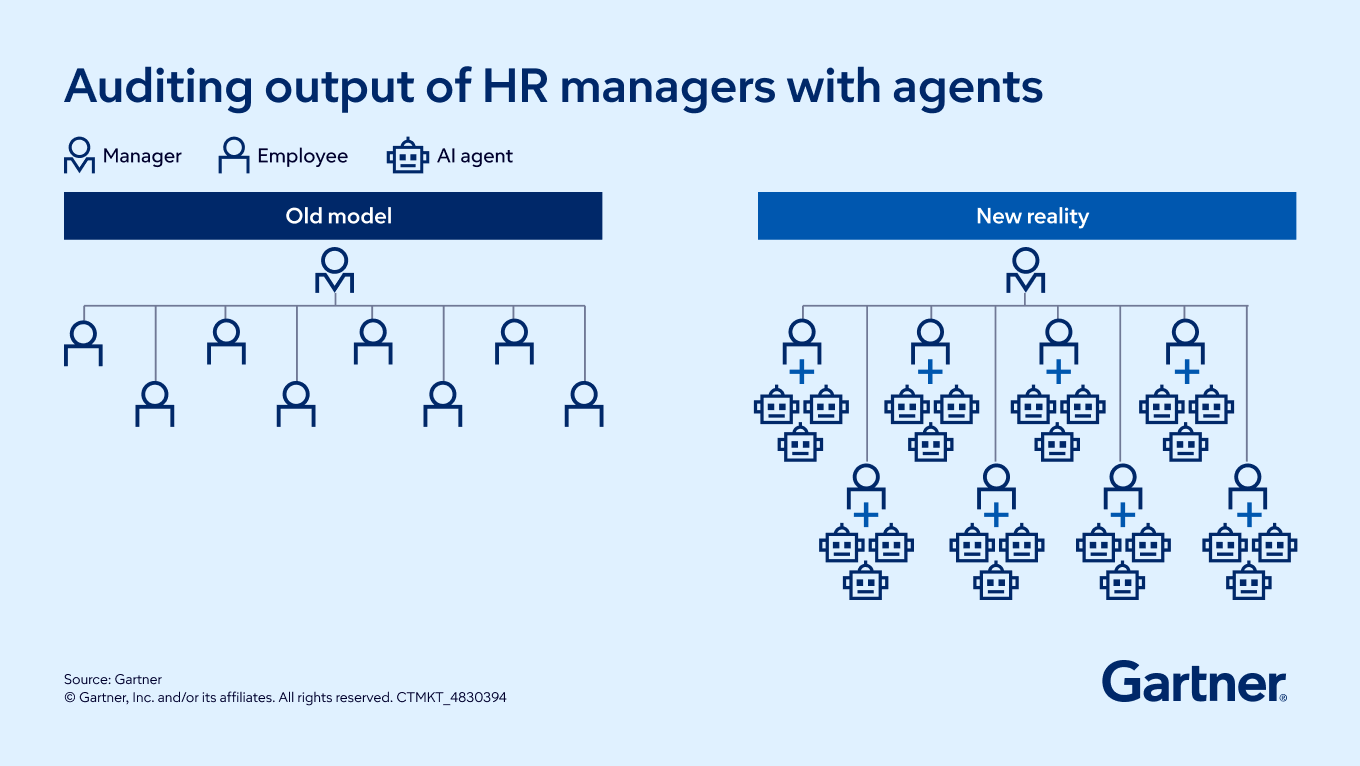

Agentic AI is fundamentally changing managerial responsibility. Managers are no longer overseeing only people and tasks; they are increasingly accountable for the behavior, outputs and risks of autonomous digital agents operating alongside their teams. This shift challenges long‑held assumptions that AI will automatically simplify management or allow organizations to safely expand spans of control.

In practice, managers must now verify inputs — such as objectives, rules and constraints — while continuously evaluating outputs like workflow execution and task completion. Instead of reducing managerial effort, agentic AI often increases cognitive load. Seventy‑five percent of CHROs report that managers are more overwhelmed than ever, underscoring that AI‑driven productivity gains come with hidden oversight costs.

Why agentic AI forces a shift from control to complexity

To safely scale agentic AI, CHROs must help organizations move beyond traditional spans of control and adopt a span‑of‑complexity mindset. Complexity is no longer driven by headcount alone, but by how many agents are operating, how autonomous they are and how tightly their outputs are coupled to business risk. This shift defines the next phase of manager oversight.

Audit spans of complexity, not just headcount

Before adjusting manager headcount or scope, assess the density and autonomy of AI agents embedded within each team. A manager overseeing eight employees who each rely on multiple autonomous agents faces materially greater complexity than raw headcount suggests. Ignoring this reality increases the risk of operational failure as agents scale faster than oversight models.
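One way to make such an audit concrete is a simple scoring pass over each team. The sketch below is illustrative only: the `Agent` and `Employee` types, the autonomy weights and the 1-to-3 risk scale are all assumptions made for this example, not a Gartner formula. The autonomy labels reuse the four levels this article applies to task classification (advisory, assistive, assumptive, autonomous).

```python
from dataclasses import dataclass, field

# Hypothetical weights: the more autonomous the agent, the more oversight it needs.
AUTONOMY_WEIGHT = {"advisory": 1, "assistive": 2, "assumptive": 3, "autonomous": 4}


@dataclass
class Agent:
    name: str
    autonomy: str  # one of the AUTONOMY_WEIGHT keys
    risk: int      # assumed scale: 1 (low) to 3 (high) coupling to business risk


@dataclass
class Employee:
    name: str
    agents: list[Agent] = field(default_factory=list)


def span_of_complexity(team: list[Employee]) -> int:
    """Return headcount plus an oversight load for every agent on the team."""
    agent_load = sum(
        AUTONOMY_WEIGHT[agent.autonomy] * agent.risk
        for employee in team
        for agent in employee.agents
    )
    return len(team) + agent_load


# Eight employees, each relying on one assistive and one autonomous agent.
team = [
    Employee(
        name=f"employee-{i}",
        agents=[
            Agent("scheduler", autonomy="assistive", risk=1),
            Agent("approver", autonomy="autonomous", risk=2),
        ],
    )
    for i in range(8)
]

print(len(team))                 # headcount: 8
print(span_of_complexity(team))  # complexity score: 88
```

Under these assumed weights, a team of eight scores 88 rather than 8. The absolute number is arbitrary; the score is only useful for comparing teams against each other and for flagging managers whose oversight load is far larger than their headcount implies.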

Establish clear human-in-the-loop protocols

Agentic systems require predefined intervention points. CHROs should partner with IT and business leaders to establish human‑in‑the‑loop thresholds that trigger human review, such as an agent’s confidence score falling below an acceptable level.

Tasks should also be classified by risk and required human agency: advisory, assistive, assumptive or autonomous. HR must clearly define where human judgment, empathy or discretion is legally, culturally or ethically non‑negotiable.
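A protocol like this can be expressed as a small routing rule. The following is a minimal sketch under stated assumptions: the confidence thresholds, the `AgentAction` type and the `human_required` flag are hypothetical, and treating the four categories as ordered from most to least human involvement is an interpretation of the labels, not a definition given here.

```python
from dataclasses import dataclass
from enum import Enum


class HumanAgency(Enum):
    # Assumed ordering: most human involvement first.
    ADVISORY = 1
    ASSISTIVE = 2
    ASSUMPTIVE = 3
    AUTONOMOUS = 4


# Hypothetical minimum confidence for an agent to proceed without review.
# A value above 1.0 can never be met, so a human always reviews that category.
MIN_CONFIDENCE = {
    HumanAgency.ADVISORY: 1.01,
    HumanAgency.ASSISTIVE: 0.95,
    HumanAgency.ASSUMPTIVE: 0.85,
    HumanAgency.AUTONOMOUS: 0.70,
}


@dataclass
class AgentAction:
    task: str
    agency: HumanAgency
    confidence: float             # agent's self-reported score, 0.0 to 1.0
    human_required: bool = False  # set where HR deems human judgment non-negotiable


def needs_human_review(action: AgentAction) -> bool:
    """Escalate if HR mandates a human or confidence is below the category threshold."""
    if action.human_required:
        return True
    return action.confidence < MIN_CONFIDENCE[action.agency]


print(needs_human_review(AgentAction("reorder supplies", HumanAgency.AUTONOMOUS, 0.90)))  # False
print(needs_human_review(AgentAction("reorder supplies", HumanAgency.AUTONOMOUS, 0.60)))  # True
print(needs_human_review(
    AgentAction("draft termination letter", HumanAgency.AUTONOMOUS, 0.99, human_required=True)
))  # True
```

Keeping the `human_required` override separate from the confidence check reflects the two distinct decisions described above: IT and business leaders tune thresholds, while HR marks the tasks where no confidence score is high enough to remove the human.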

Upskill managers for exception handling and AgentOps

Manager development must move beyond routine task execution. As agentic AI scales, managers increasingly act as auditors, troubleshooters and escalation points — a discipline often referred to as AgentOps.

Training should focus on evaluating escalated agent outputs, correcting edge cases and documenting rationale so systems improve over time. Without these skills, exceptions quickly become a new source of administrative burnout rather than a lever for learning.

Redesign manager role expectations for AI

Manager role profiles must formally recognize AI oversight and escalation management as core responsibilities. Without explicitly allocating time and authority for managers to act as the human in the loop, agentic AI risks overwhelming already stretched leaders.

One in four managers says they would opt out of their role if given the chance — a significant increase from the prior year — highlighting the urgency for CHROs to stabilize and modernize the manager experience.

Action steps for CHROs

Here’s where to start:

Assess manager workload using a span-of-complexity audit — don’t just count heads.

Automate routine managerial tasks where possible, but keep human accountability for high-risk decisions.

Redesign manager role expectations to formally include AI oversight and exception handling.

Upskill managers for AgentOps, focusing on auditing, monitoring and escalation management.

Partner with IT to set clear human-in-the-loop protocols for agentic AI interventions.

Ensure HR leads on where human judgment, empathy or discretion is non-negotiable.

Collaborate with IT and business leaders to classify tasks by risk and define the right level of human involvement — protect critical decisions from being delegated to agents alone, and keep compliance and culture intact.

FAQs on agentic AI for CHROs

How will agentic AI impact manager spans of control?

Agentic AI changes the equation. Instead of widening spans based on headcount, you need to audit the complexity and autonomy of digital agents. Gartner studies show that organizations that widen spans of control without accounting for agentic complexity risk operational failures and will likely need to rehire middle managers to restore oversight and quality.

What new skills do managers need with agentic AI?

Managers must shift from routine task execution to auditing, deploying and maintaining AI agents — known as AgentOps. They need to handle escalations, correct edge cases and log rationales so systems improve. Upskilling in exception handling and AI troubleshooting is now essential for effective management.

What protocols should CHROs establish for agentic AI oversight?

CHROs should partner with IT to set human-in-the-loop protocols that trigger human intervention when an agent's confidence falls below a defined threshold. Tasks must be categorized by risk, with HR defining where human empathy or judgment is required. This protects quality, compliance and culture as agentic AI scales.
